A Comprehensive Overview of Artificial Intelligence: History, Models, Developers, Current Status, and Future Developments

Introduction

Artificial Intelligence (AI) refers to the simulation of human intelligence in machines designed to think and act like humans. This rapidly evolving field encompasses a variety of technologies, methodologies, and applications aimed at creating systems capable of performing tasks that typically require human intelligence. These tasks include learning, reasoning, problem-solving, perception, language understanding, and interaction.

History of Artificial Intelligence

Early Beginnings

- 1950s: The concept of AI can be traced back to the mid-20th century. Alan Turing, a British mathematician and logician, laid the groundwork with his seminal paper “Computing Machinery and Intelligence” (1950), in which he proposed the Turing Test as a measure of machine intelligence.

- 1956: The term “Artificial Intelligence,” introduced by John McCarthy in the 1955 proposal he co-wrote with Marvin Minsky, Nathaniel Rochester, and Claude Shannon, gave its name to the 1956 Dartmouth Summer Research Project. This workshop is considered the birth of AI as a field of research.

The Formative Years

- 1950s-1960s: Early AI research focused on problem-solving and symbolic methods. Programs such as the Logic Theorist (1955) by Allen Newell and Herbert A. Simon and the General Problem Solver (1957) showcased the potential of AI.

- 1970s-1980s: AI research alternated between periods of optimism and setbacks. The setbacks, known as AI winters, followed from limited processing power and unrealistic expectations. Nevertheless, significant progress was made in areas like expert systems, which used knowledge-based approaches to solve problems in specific domains.

The Rise of Machine Learning

- 1990s-2000s: The resurgence of AI was driven by advances in machine learning, a subset of AI focused on developing algorithms that allow computers to learn from data. Key developments included the rise of neural networks and support vector machines.

- 2010s: The advent of deep learning, which uses neural networks with many layers, revolutionized AI. Landmark achievements such as DeepMind’s AlphaGo defeating Lee Sedol, one of the world’s strongest Go players, 4-1 in 2016 highlighted the potential of deep learning.

Models in Artificial Intelligence

Machine Learning Models

- Supervised Learning: Involves training a model on labeled data. Common algorithms include linear regression, decision trees, and support vector machines.

- Unsupervised Learning: Deals with unlabeled data and aims to identify patterns. Clustering algorithms like K-means and hierarchical clustering are typical examples.

- Reinforcement Learning: Involves training agents to make a sequence of decisions by rewarding them for desirable actions. Q-learning and Deep Q Networks (DQNs) are prominent techniques.
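To make the reinforcement-learning bullet concrete, here is a minimal tabular Q-learning sketch in Python. The corridor environment, the `step` function, and the hyperparameters (`alpha`, `gamma`, `epsilon`, episode count) are illustrative assumptions, not a standard benchmark. The agent repeatedly applies the update Q(s, a) ← Q(s, a) + α[r + γ·max Q(s′, ·) − Q(s, a)], and with enough episodes its greedy policy should learn to walk right toward the rewarding state.

```python
import random

# Hypothetical toy environment: a corridor of states 0..4.
# Entering state 4 (terminal) yields reward +1; every other move yields 0.
N_STATES = 5
GOAL = N_STATES - 1
ACTIONS = (0, 1)  # 0 = move left, 1 = move right


def step(state, action):
    """Deterministic transition: returns (next_state, reward, done)."""
    next_state = max(0, state - 1) if action == 0 else min(GOAL, state + 1)
    if next_state == GOAL:
        return next_state, 1.0, True
    return next_state, 0.0, False


def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    # Tabular action-value estimates q[s][a], initialised to zero.
    q = [[0.0 for _ in ACTIONS] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done, steps = 0, False, 0
        while not done and steps < 100:  # cap episode length
            # Epsilon-greedy action selection with random tie-breaking.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                best = max(q[state])
                action = rng.choice([a for a in ACTIONS if q[state][a] == best])
            next_state, reward, done = step(state, action)
            # Target: reward plus discounted best next value,
            # with no bootstrapping from the terminal state.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            steps += 1
    return q


q = q_learning()
# Greedy action in each non-terminal state.
policy = [max(ACTIONS, key=lambda a: q[s][a]) for s in range(GOAL)]
print(policy)  # [1, 1, 1, 1]: move right everywhere
```

Deep Q Networks follow the same update idea but replace the table with a neural network that approximates Q(s, a), which lets the approach scale to state spaces far too large to enumerate.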

Neural Networks and Deep Learning

- Artificial Neural Networks (ANNs): Inspired by the human brain, ANNs consist of interconnected neurons organized in layers. They are the foundation of deep learning.

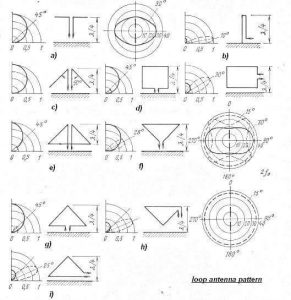

- Convolutional Neural Networks (CNNs): Primarily used for image and video recognition, CNNs utilize convolutional layers to automatically and adaptively learn spatial hierarchies of features.

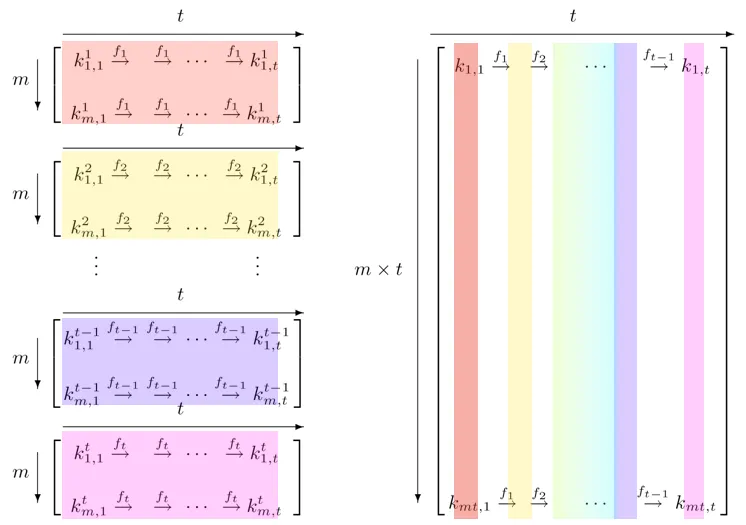

- Recurrent Neural Networks (RNNs): Designed for sequential data, RNNs are widely used in language modeling and time series prediction. Long Short-Term Memory (LSTM) networks are a popular variant.
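The core operation behind the CNN bullet can be sketched in plain Python: slide a small kernel over an image and take a weighted sum at each position. This is a minimal illustration assuming a hand-picked vertical-edge kernel and a toy 5×6 image; in a real CNN the kernel weights are learned during training, and libraries implement the operation far more efficiently. Like most deep-learning libraries, the sketch computes cross-correlation (the kernel is not flipped).

```python
def conv2d(image, kernel):
    """Valid-mode 2-D cross-correlation of a list-of-lists image with a kernel."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum of the kh x kw patch whose top-left corner is (i, j).
            total = 0.0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output


def relu(feature_map):
    """Element-wise ReLU nonlinearity, applied after the convolution."""
    return [[max(0.0, v) for v in row] for row in feature_map]


# Toy 5x6 image: dark left half (0), bright right half (1).
image = [[0, 0, 0, 1, 1, 1] for _ in range(5)]
# Hand-picked vertical-edge kernel (an assumption; a CNN would learn its weights).
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]
features = relu(conv2d(image, kernel))
for row in features:
    print(row)  # each of the 3 rows prints [0.0, 3.0, 3.0, 0.0]
```

The output is nonzero only where the image brightens from left to right, the kind of low-level feature early convolutional layers tend to pick up; stacking layers lets later ones combine such features into more complex patterns.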

Other AI Models

- Natural Language Processing (NLP): Focuses on the interaction between computers and humans through natural language. Models like BERT (Bidirectional Encoder Representations from Transformers) and GPT (Generative Pre-trained Transformer) have achieved state-of-the-art results in various NLP tasks.

- Generative Adversarial Networks (GANs): Consist of two neural networks, a generator and a discriminator, that compete with each other to produce increasingly realistic data. GANs have been used for image synthesis and data augmentation.
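The competition between the generator and the discriminator is usually written as a two-player minimax game. In the original GAN formulation (Goodfellow et al., 2014), D(x) is the discriminator’s estimated probability that x is a real sample, and G(z) maps noise z drawn from a prior p_z to a synthetic sample:

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```

The discriminator is trained to maximize V, while the generator is trained to minimize it. In practice the generator is often trained to maximize log D(G(z)) instead, which gives stronger gradients early in training when the discriminator easily rejects generated samples.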

Key Developers and Contributors

Pioneers

- Alan Turing: Often referred to as the father of computer science and AI, Turing’s work laid the foundational concepts for modern computing and AI.

- John McCarthy: Coined the term “Artificial Intelligence” and made significant contributions to AI research, including the development of the Lisp programming language.

Modern Contributors

- Geoffrey Hinton: A leading figure in deep learning, Hinton’s work on backpropagation and neural networks has been instrumental in the resurgence of AI.

- Yoshua Bengio: Known for his contributions to deep learning and AI, Bengio has significantly advanced our understanding of neural networks and representation learning.

- Andrew Ng: A prominent AI researcher and educator, Ng co-founded Google Brain and contributed to the development of large-scale machine learning algorithms.

Current Status of AI

Applications

- Healthcare: AI is being used for diagnostics, personalized medicine, and drug discovery. Systems such as IBM Watson have shown potential in analyzing medical data and providing insights.

- Autonomous Vehicles: Companies such as Tesla and Waymo are developing self-driving cars that use AI to interpret sensor data and navigate complex environments.

- Finance: AI algorithms are employed for algorithmic trading, fraud detection, and customer service automation through chatbots.

Ethical and Societal Considerations

- Bias and Fairness: AI systems can inadvertently perpetuate biases present in training data, leading to unfair outcomes. Efforts are underway to develop fair and transparent AI models.

- Privacy: The use of AI in surveillance and data analysis raises significant privacy concerns. Striking a balance between innovation and privacy protection is a critical challenge.

- Job Displacement: AI’s automation capabilities can lead to job displacement in various sectors. Addressing the economic and social impact of AI-driven automation is essential for a sustainable future.

Future Developments in AI

Advanced Research Areas

- Explainable AI (XAI): As AI systems become more complex, the need for transparency and interpretability grows. XAI aims to make AI decision-making processes understandable to humans.

- General AI: Today’s narrow AI systems excel at specific tasks. Moving beyond them toward Artificial General Intelligence (AGI), capable of performing any intellectual task a human can, remains a long-term goal.

- Quantum AI: Combining quantum computing with AI is an active research area. If practical quantum hardware matures, it may help with certain problems that are intractable for classical computers, with potential impact on fields like cryptography, optimization, and materials science.

Potential Impact

- Healthcare: AI could lead to breakthroughs in early disease detection, personalized treatment plans, and efficient healthcare delivery systems.

- Climate Change: AI can help optimize energy usage, model climate patterns, and develop innovative solutions for environmental sustainability.

- Education: AI-driven personalized learning systems could revolutionize education by providing tailored content and support to students based on their individual needs and learning styles.

Conclusion

Artificial Intelligence has come a long way since its inception, evolving through various phases and achieving remarkable milestones. The field continues to grow at an unprecedented pace, with ongoing research and development aimed at solving some of the world’s most complex problems. While AI presents significant opportunities, it also poses ethical and societal challenges that require careful consideration and proactive measures. As we look to the future, the continued advancement of AI holds the promise of transformative impact across multiple domains, shaping the way we live, work, and interact with the world.
